I am happy to share one of the things I have been working on since I joined Dayforce. What is it?

Data Reliability Engineering

I had the opportunity to put in place a new functional area called Data Reliability Engineering. The name may sound good, but you may wonder what it actually involves.

Data Reliability Engineering (DRE) can be seen as a child of Site Reliability Engineering (SRE). The foundation of DRE is SRE. Organizationally speaking, we embedded DRE in the SRE organization at Dayforce.

DRE is SRE for Machine Learning and Data systems.

A DRE team is responsible for ensuring that data pipelines, storage, and retrieval systems are reliable, robust, and scalable. It borrows principles from software engineering, DevOps, and SRE, and applies them to data-intensive systems.

The goal of the team is to ensure that data, which is a critical business asset, is consistently available, accurate, and timely for processes such as auditing, machine learning training, and analysis, and for stakeholders such as data scientists, ML engineers, and data analysts.

A DRE team makes sure that the right Data Service-Level Indicators (DSLIs) are in place, and that the Data Service-Level Objectives (DSLOs) and Agreements (DSLAs) are respected and constantly monitored. It also helps automate data movement, increase the observability of data pipelines and data systems, and manage incidents that affect data availability, and it supports other teams with all of the above.
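To make the indicator and objective idea concrete, here is a minimal sketch of a freshness DSLI checked against a DSLO. The function names, the one-hour threshold, and the 99% objective are illustrative assumptions, not the definitions used at Dayforce.

```python
from datetime import timedelta


def freshness_sli(arrival_delays, threshold):
    """Return the fraction of pipeline runs whose data landed within `threshold`.

    `arrival_delays` is a list of timedelta values, one per run (hypothetical input).
    """
    if not arrival_delays:
        raise ValueError("no runs to evaluate")
    on_time = sum(1 for delay in arrival_delays if delay <= threshold)
    return on_time / len(arrival_delays)


def meets_slo(sli, objective):
    """An SLO is met when the measured indicator reaches the objective."""
    return sli >= objective


# Hypothetical delays, in minutes, for five recent runs of one pipeline.
delays = [timedelta(minutes=m) for m in (12, 45, 30, 95, 20)]
sli = freshness_sli(delays, threshold=timedelta(hours=1))
slo_met = meets_slo(sli, objective=0.99)
print(f"freshness SLI = {sli:.2f}, SLO met = {slo_met}")
```

In this made-up sample, one run out of five arrives late, so the indicator falls short of the objective and the gap would be worth investigating.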

Overall, it ensures that the data used to generate analytics reports, machine learning models or any Dayforce features is accurate, reliable, and available on time.

A data reliability engineer (DRE) is a professional responsible for implementing and managing data reliability engineering principles. They act as the guardians of data integrity and availability within the organization.

The DRE team acts as a trusted advisor for the company, actively participating in data platform infrastructure design and scalability considerations.

Move Fast by Reducing the Cost of Failure

DRE helps teams move fast by reducing the cost of failure in Machine Learning and Data projects. Some will say that it makes for a slow start, but it pays off in the long run. We focus on long-term development velocity, not on short bursts of work to ship features.

DRE (and SRE) helps improve the product development output.

How? By reducing the MTTR (Mean Time To Repair). When issues are resolved quickly, developers do not have to waste time cleaning up after them. The later we discover a bug, the more expensive it is to fix.
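As a rough illustration, MTTR can be computed as the average time between detecting an incident and resolving it. The incident timestamps below are invented for the example, and the tuple layout is an assumption.

```python
from datetime import datetime, timedelta


def mean_time_to_repair(incidents):
    """Average repair duration over (detected_at, resolved_at) pairs."""
    if not incidents:
        raise ValueError("no incidents to average")
    durations = [resolved - detected for detected, resolved in incidents]
    # sum() needs a timedelta start value to add timedeltas together.
    return sum(durations, timedelta()) / len(durations)


# Hypothetical incidents: one took 90 minutes to repair, the other 30 minutes.
incidents = [
    (datetime(2024, 1, 1, 10, 0), datetime(2024, 1, 1, 11, 30)),
    (datetime(2024, 1, 5, 9, 0), datetime(2024, 1, 5, 9, 30)),
]
mttr = mean_time_to_repair(incidents)
print(mttr)
```

Tracking this number over time is one simple way to see whether reliability work is paying off.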

The reliability teams are not here to slow projects down. Quite the opposite: they are here to improve long-term velocity while increasing reliability.

Data Engineer vs. Data Reliability Engineer

Data Engineers are responsible for developing data pipelines and appropriately testing their code.

Data Reliability Engineers are responsible for supporting the pipelines in production by monitoring the infrastructure and data quality.

In other words, Data Engineering teams usually perform unit and regression tests that address known or predictable data issues before the code goes to production. DRE teams instrument the production environment to detect unknown problems before they impact end users.
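One simple way to picture production instrumentation is a volume check that flags a daily row count far from its recent history. The sketch below uses a z-score, with an assumed threshold of 3 standard deviations and made-up row counts. It is only one possible technique, not a description of a specific tool.

```python
from statistics import mean, stdev


def is_volume_anomaly(history, today, z_threshold=3.0):
    """Flag `today` when it sits more than `z_threshold` sample standard
    deviations away from the mean of `history` (needs at least two points)."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all is unexpected.
        return today != mu
    return abs(today - mu) / sigma > z_threshold


# Hypothetical row counts for the last five daily loads of a table.
row_counts = [1000, 1020, 980, 1010, 990]
print(is_volume_anomaly(row_counts, 400))   # a sudden drop in volume
print(is_volume_anomaly(row_counts, 1005))  # within normal variation
```

A check like this needs no prior knowledge of what might break, which is what distinguishes it from a unit test written for a known failure mode.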

What do we do?

DRE teams have the goal of setting and maintaining standards for the accuracy and reliability of production data, while enabling velocity for data, analytics, and machine learning engineers. The DRE team does more than react to machine learning and data outages: it is in charge of preemptively identifying and fixing potential problems, producing automated ways of testing and validating data, automatically detecting PII (Personally Identifiable Information) in different areas of the ecosystem, and so on.
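As a small illustration of automated PII detection, the sketch below scans the string fields of a record with two regular expressions. The patterns (email addresses and US-style social security numbers), the `find_pii` name, and the sample record are all assumptions made for the example. Real detection would need far broader coverage and would likely rely on dedicated tooling.

```python
import re

# Deliberately simple, illustrative patterns; they will miss many real cases.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_pii(record):
    """Map each string field of `record` to the PII kinds found in its value."""
    findings = {}
    for field, value in record.items():
        if not isinstance(value, str):
            continue  # only free-text values are scanned in this sketch
        kinds = [name for name, pattern in PII_PATTERNS.items() if pattern.search(value)]
        if kinds:
            findings[field] = kinds
    return findings


# Hypothetical record with PII hidden in free-text fields.
record = {
    "note": "contact jane.doe@example.com",
    "id": 42,
    "comment": "SSN 123-45-6789 on file",
    "city": "Toronto",
}
pii_report = find_pii(record)
print(pii_report)
```

Running a scan like this on samples from each dataset gives an early warning when sensitive data shows up somewhere it was not expected.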

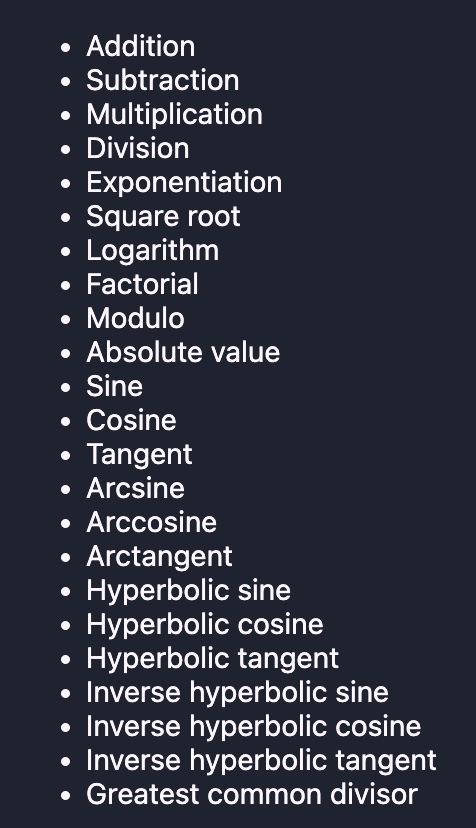

Areas that DREs have purview over include:

- Data lifecycle procedures (e.g., when, and how data gets deprecated)

- Data SLI (Service Level Indicator), Data SLA (Service Level Agreement), and Data SLO (Service Level Objective) definition and documentation

- Data observability strategy and implementation

- Data pipeline code review and testing

- Automation of data movement

- Management of data incidents

- Data outage triage and response process

- Automating data-related processes in the infrastructure to constantly remove toil

- Data ownership strategy and documentation

- Education and culture-building (e.g., internal roadshow to explain data SLAs)

- Developing guardrails around data processes to increase data reliability, availability, and privacy

- Monitoring costs of data activities (pipelines, storage, compute, network, etc.)

- Track the lineage of the data

- Perform change management when data tooling changes

- Ensure cross-team communication regarding data activities

- Ensure PII (Personally Identifiable Information) is properly handled in the data ecosystem

- Ensure the business is compliant with all regulations regarding data (e.g., GDPR)

- Ensure that the Machine Learning models are versioned, reproducible, evaluated, monitored, and compliant with overall software engineering best practices

DRE teams do not just put out fires. They put the guardrails in place to prevent the fires from happening. They enable agility for ML engineers, analytics engineers, and data scientists, keeping them moving quickly knowing that safety guards are in place to prevent changes to the data model from impacting production. Data teams are always balancing speed with reliability. The Data Reliability Engineer owns the strategies for achieving that balance.