I am really proud to announce the release of the Open Semantic Framework version 3.0. This is a major milestone for the OSF platform and it includes important new features and improvements.


The updated platform has just emerged from more than a year and a half of full-time development sponsored by one of Structured Dynamics’ clients: Healthdirect Australia. OSF’s development has been highly influenced by the big enterprise requirements of the HDA sponsor, and two portals are now fully operated by OSF: healthinsite and Pregnancy, Birth and Baby. OSF 3.0 is already in production with these two portals, and it will continue to evolve in the coming months and years.

The OSF release is major in a number of ways. The first thing you will notice is that we re-branded the entire project, including all of its moving parts, around the OSF name. OSF for Drupal (previously known as conStruct) was migrated to Drupal 7 and about 80% of its code was rewritten. Seven new OSF Web Services (previously known as structWSF) endpoints were created. The old IP-based security layer was completely replaced by a new key-based security layer. A new revisioning system has been put in place to revision every record as it changes. A new caching layer has been added to the OSF Web Services to improve their performance and decrease the load on the other pieces of the OSF stack (about 80% of the non-search queries will hit the cache). A set of command line tools has been developed to help system administrators manage and automate tasks on OSF instances. A set of system integration tests, composed of 746 tests and 4139 assertions, exercises all of the functionality of the system to make sure it is properly deployed on a server. The OSF Wiki has been completely rewritten and re-organized to help users and developers find answers to their questions.

You can check the list of all the OSF 3.0 features, and the list of all the features that are new in OSF 3.0. Now let’s see what this new release is really all about.


The OSF (Open Semantic Framework) Brand

The first thing you will notice with this new OSF 3.0 release is that the whole project got re-branded around the OSF terminology. The Open Semantic Framework (OSF) stack is now composed of:

OSF Web Services

The OSF Web Services have changed drastically since version 1.1. Most of the code was rewritten, a new structure was put in place, and new features and new web service endpoints were created. In this section, we will cover what changed in the OSF Web Services and what these new features are.

New Security Layer

Initially, we created a simple and effective security layer for the OSF Web Services. It was based on the IP of the requester, nothing more, nothing less. That was five years ago. This simple security layer was quite effective, but it was a nightmare to manage.

For OSF 3.0, we ditched this old security layer and replaced it with something secure and much easier to manage.

The new security layer does two things:

- Validates the web service call

- Validates the data access of the user

To validate the web service call, the new security system uses a secret-key authentication system. Every HTTP query that is sent to any web service endpoint needs to comply with the security protocol. If it doesn’t, the request is refused.

Then, if the call is authenticated, the web service endpoint makes sure that the requesting user has proper access to the datasets that are being queried. This second validation step makes sure that users can only access the data to which they have access rights.

The real improvement of the new security layer is how the users are managed. In the past, we were managing individual IP addresses. Now, we are managing groups of users. All dataset access permissions are related to a group, and each group is composed of one or more users. When a web service endpoint checks whether a requesting user has access to the content of a certain dataset, it checks whether that user belongs to a group that has access to the content of that dataset.

It is now much easier to manage groups of users at the level of the dataset than individual IP addresses.
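The group-based check described above can be sketched as follows. This is only an illustration under stated assumptions: the arrays, the URIs and the `userCanAccess()` function are hypothetical and are not taken from the OSF code base, which stores and resolves permissions in its own way.

```php
<?php
// Hypothetical in-memory model of a group-based dataset access check.
// None of these names come from the OSF code base.

// Groups each user belongs to (user URI => list of group URIs).
$userGroups = [
    'http://localhost/users/foo' => ['http://localhost/groups/editors'],
    'http://localhost/users/bar' => [],
];

// Groups that have access to each dataset (dataset URI => list of group URIs).
$datasetGroups = [
    'http://localhost/datasets/events' => ['http://localhost/groups/editors'],
];

function userCanAccess(string $user, string $dataset, array $userGroups, array $datasetGroups): bool
{
    $groupsOfUser = $userGroups[$user] ?? [];
    $groupsOfDataset = $datasetGroups[$dataset] ?? [];

    // Access is granted when the user shares at least one group with the dataset.
    return count(array_intersect($groupsOfUser, $groupsOfDataset)) > 0;
}

var_dump(userCanAccess('http://localhost/users/foo', 'http://localhost/datasets/events', $userGroups, $datasetGroups)); // bool(true)
var_dump(userCanAccess('http://localhost/users/bar', 'http://localhost/datasets/events', $userGroups, $datasetGroups)); // bool(false)
```

Granting a new user access then only means adding that user to a group, instead of touching the permissions of every dataset.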

New Revisioning System

A new record revisioning system is now available in OSF. If required, every change to a record can be revisioned. This means that if someone makes an error when editing a record, the changes can be rolled back at any time using the new revisioning system.

A new set of web service endpoints has been created to manage the revisions. You can list, read, update, delete, and compare revisions with these new endpoints.

New Web Service Endpoints

A series of new web service endpoints have been created:

Multi-Language Support

All of the web services that create, update or read data from OSF now have multi-lingual capabilities. If you are creating data, the only thing you have to do is to specify the language for each literal you are defining in the RDF documents you are indexing in OSF. If you are reading or searching data, you only have to specify the language you want to use for each web service query you are creating.

New Caching Layer

OSF is a stack that includes a multitude of underlying systems such as Virtuoso, Solr, OWLAPI, GATE, etc. Depending on the web service endpoints that are used, and depending on how they are used, the same query can be requested again and again, and each of these background services may be queried again and again too.

To improve the performance of each of the OSF Web Services, and to minimize the usage of these underlying systems as much as possible, we added a caching layer at the level of the web service endpoints. The result is that every OSF Web Services query is cached in the caching layer. This means that when the same query is requested a second time, the results come from the caching layer.

The caching system that is used by OSF is Memcached. More information about the OSF Cache can be read on the OSF Wiki.
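To make the idea concrete, here is a minimal sketch of caching at the level of an endpoint query. It is an assumption-laden illustration, not the OSF implementation: a plain PHP array stands in for Memcached so the example runs without the extension, and the `cacheKey()` and `cachedQuery()` helpers are hypothetical names.

```php
<?php
// Sketch only: an array stands in for Memcached so the example runs anywhere.
// The function names are hypothetical and do not come from OSF.

// Builds a deterministic cache key from an endpoint name and its parameters.
function cacheKey(string $endpoint, array $params): string
{
    ksort($params); // the same parameters in a different order map to the same key
    return md5($endpoint . '?' . http_build_query($params));
}

// Returns the cached result when present; otherwise runs the query and stores it.
function cachedQuery(array &$cache, string $endpoint, array $params, callable $runQuery)
{
    $key = cacheKey($endpoint, $params);
    if (array_key_exists($key, $cache)) {
        return $cache[$key];
    }
    $cache[$key] = $runQuery($endpoint, $params);
    return $cache[$key];
}

$cache = [];
$backendCalls = 0;
$runQuery = function (string $endpoint, array $params) use (&$backendCalls) {
    $backendCalls++; // counts how often the underlying systems are actually queried
    return 'results for ' . $params['query'];
};

cachedQuery($cache, 'search', ['query' => 'forest', 'items' => 20], $runQuery);
cachedQuery($cache, 'search', ['items' => 20, 'query' => 'forest'], $runQuery);

echo $backendCalls, "\n"; // 1: the second call was served from the cache
```

A real deployment would also need to invalidate or expire entries when the underlying records change, which this sketch leaves out.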

Improved Search

The Search web service endpoint, which is by far the most used OSF web service endpoint, also improved quite a lot in developing this new version.

First, the Search endpoint is now using the eDisMax query parser. In itself, this changes everything in the endpoint and leads to the creation of multiple new search functionalities.

It is now possible to change the ranking of the search results by boosting the scoring of the results based on different things such as their dataset provenance, their types or any of their attribute/values. This makes it possible to improve the quality of the results returned on an OSF web portal depending on the context of a search and the semantics of the records being searched.

It is also now possible to add restrictions to the search queries. This means that search keywords will be restricted to a set of attributes. Then it is also possible to boost the scoring of the returned results depending on where the search keywords appeared.

There is a new spell-checker function for the search queries. This means that if no results are returned for a specific search query, then the system will return a series of possible keywords that the user may want to use to re-initiate the search query.

Finally, an extended search query syntax is now supported by the Search endpoint. This enables more complex search queries to be sent to the Search endpoint, opening the door to the creation of more complex contextual search profile queries.
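For readers unfamiliar with eDisMax, the sketch below shows the kind of standard Solr request parameters that sit behind restrictions, boosts and spell-checking. It does not show how the OSF Search endpoint builds its Solr queries, which is not described in this post; the field names (`title`, `description`, `type`) and their values are hypothetical.

```php
<?php
// Standard Solr eDisMax request parameters; field names and values are hypothetical.
$params = [
    'defType'    => 'edismax',             // select the eDisMax query parser
    'q'          => 'forest',              // the user's search keywords
    'qf'         => 'title^3 description', // restrict keywords to these fields, boosting matches in title
    'bq'         => 'type:Event^2',        // boost records of a given type
    'spellcheck' => 'true',                // ask Solr for spelling suggestions
    'rows'       => 20,                    // number of results per page
];

// Serialize the parameters into a URL query string.
$queryString = http_build_query($params);
echo $queryString, "\n";
```

The `qf` parameter covers both the restriction of keywords to a set of fields and the boost that depends on where the keyword matched, while `bq` adds a boost that is independent of the keywords.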

New Interfacing Mechanism

A new interfacing mechanism has been put in place for the OSF Web Services. An interface is the code that is run by the web service endpoint for a given query.

An interface corresponds to a specific version of a web service endpoint. Two different interfaces for the same endpoint may comply with different versions of its API. However, these two interfaces can work side by side using the same data.

If two interfaces comply with the same endpoint API version, it means that they process the query differently (like querying Solr 4.0 instead of 3.6). If two interfaces don’t comply with the same endpoint API version, it means that each interface supports a different version of the endpoint.

This new interfacing mechanism becomes handy to support more than one triple store, or when the same OSF instance needs to use different Solr query parsers, or when some of the endpoints have to be backward compatible for some portals/users that still need to be supported by the OSF instance, etc.

The new interfacing mechanism gives the flexibility to run different code, or to support different web service API versions, on the same OSF instance.
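The following sketch illustrates the general idea of several interfaces serving the same endpoint. It is purely hypothetical: the `SearchInterface`, `DefaultSearch`, `LegacySearch` and `runSearch()` names are invented for this example and do not reflect how OSF declares or selects its interfaces.

```php
<?php
// Hypothetical sketch: none of these class or function names come from OSF.

interface SearchInterface
{
    public function apiVersion(): string;
    public function process(string $query): string;
}

final class DefaultSearch implements SearchInterface
{
    public function apiVersion(): string { return '3.0'; }
    public function process(string $query): string { return "default parser: $query"; }
}

final class LegacySearch implements SearchInterface
{
    public function apiVersion(): string { return '1.1'; }
    public function process(string $query): string { return "legacy parser: $query"; }
}

// Picks the code path to run for one query sent to a shared endpoint.
function runSearch(array $interfaces, string $name, string $query): string
{
    if (!isset($interfaces[$name])) {
        throw new InvalidArgumentException("Unknown interface: $name");
    }
    return $interfaces[$name]->process($query);
}

$interfaces = ['default' => new DefaultSearch(), 'legacy' => new LegacySearch()];

echo runSearch($interfaces, 'default', 'forest'), "\n"; // default parser: forest
echo runSearch($interfaces, 'legacy', 'forest'), "\n";  // legacy parser: forest
```

Both interfaces answer the same endpoint and could read the same data; only the code that processes the query differs.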

OSF for Drupal

OSF for Drupal now runs on Drupal 7. About 80% of the Drupal-related code got rewritten and we can now state that OSF is fully integrated into Drupal.

Drupal Connectors

A series of OSF connectors have been developed in the last year and a half that basically let Drupal’s core features use OSF instead of MySQL: Entity & Entity API, FieldAPI & FieldStorage and the SearchAPI. These connectors mean that if OSF for Drupal is installed and configured on a Drupal 7 instance, developers will be able to use these core APIs to query registered decentralized OSF instances instead of local MySQL/Solr instances.

OSF Entities

The OSF Entities connector module implements the Drupal Entity API. This means that if OSF for Drupal is properly installed and configured on a Drupal instance, the Entity API can be used to read, create, update and delete content from registered external OSF Web Services networks. Under this scenario, no information about these Drupal entities will be local to the Drupal instance. All of the content will be hosted externally on a dedicated OSF instance. All of the data manipulated by the Entity API is RDF data. In other words, the Entity API can now interface with an RDF data management system, communicating with it via web service endpoint queries.

In short, this connector makes OSF records visible to Drupal via the Entity API.

OSF FieldStorage

The OSF FieldStorage connector module creates a new FieldStorage type that enables Drupal users to save Drupal content into an OSF instance instead of saving the content in the default storage system (namely MySQL). This means that if someone uses OSF as the backend of a Drupal portal, all the Drupal content that is created will be available via the OSF web service endpoints. Other external applications that know how to talk to OSF web service endpoints are then able to leverage the content that has been created from the Drupal instance. Also, all of the content will be available as RDF.

In short, this connector saves Drupal entities into OSF instead of the default storage system (MySQL).

OSF SearchAPI

The OSF SearchAPI connector module creates a new service for the SearchAPI module. It enables the SearchAPI to send search queries to an OSF Search web service endpoint instead of the default search service. This means that the Drupal search engine is now fully powered by the OSF Search endpoint, and gives access to all the datasets hosted on one, or multiple, remote OSF instances.

Better Configuration & Management

Registering, configuring and managing OSF instances and datasets in Drupal has never been easier. The new OSF Configure module centralizes all of the features and options that are required to register, configure and maintain OSF instances and datasets.

QueryBuilder & Search Profiles

A new kind of tool has been developed in OSF for Drupal 3.x: OSF Search Profiles. A search profile is a predefined search query whose results are displayed in a block positioned on some Drupal pages. These search profiles are normally used to display lists of information that match a search query. Search profiles are also to some extent aware of their context. For example, if the main topic of a page is cancer and we have a search profile that displays a list of events, then when that search profile is used on the cancer page, cancer-related events should be displayed. That is one of the core purposes of the search profiles.

The search profiles’ underlying search queries are being created using the new OSF Query Builder module. This powerful user interface enables site administrators to create complex search queries that will be used within a search profile.

OSF Web Services PHP API

In prior versions, knowing how to query the OSF web services was not an easy task. That is why the OSF Web Services PHP API was developed: to help developers easily query OSF web service endpoints. This PHP API is a set of classes, each of which has a series of methods that can be used to query a particular web service endpoint. Let’s take this example of some OSF WS PHP API code that sends a query to the OSF Search web service endpoint:

```php
//
// Step #1: Instantiate the class of the web service that you want to query
//

// Create the SearchQuery object
$search = new SearchQuery('http://localhost/ws/', 'some-app-id', 'some-api-key', 'http://localhost/users/foo');

//
// Step #2: Define all the parameters/features/behaviors of the web service by invoking different methods of the class
//
$resultset = $search->enableInference()
                    ->excludeAggregates()
                    ->items(20)
                    ->page(40)
                    ->query("forest")
                    ->send()
                    ->getResultset();

// Print the PHP array serialization for that resultset
print_r($resultset->getResultset());
```

OSF Management Tools

A new set of command line tools has been developed for OSF version 3.0. These tools are meant to help OSF instance administrators by giving them commands that they can use in their scripts, Cron jobs, or any other middleware tooling that performs tasks on an OSF instance.

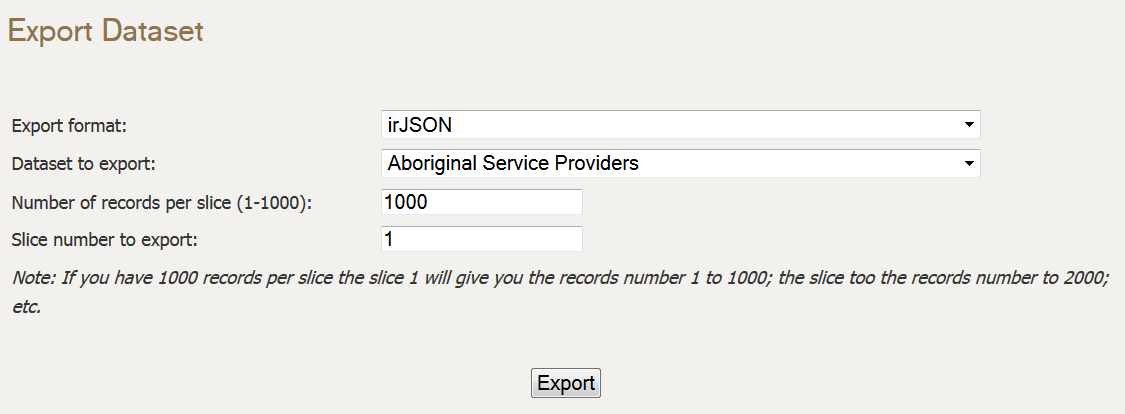

Datasets Management Tool

The Datasets Management Tool (DMT) is a command line tool used to manage the datasets of an OSF instance. With this tool, you can create, delete, update, import and export datasets directly from the command line.

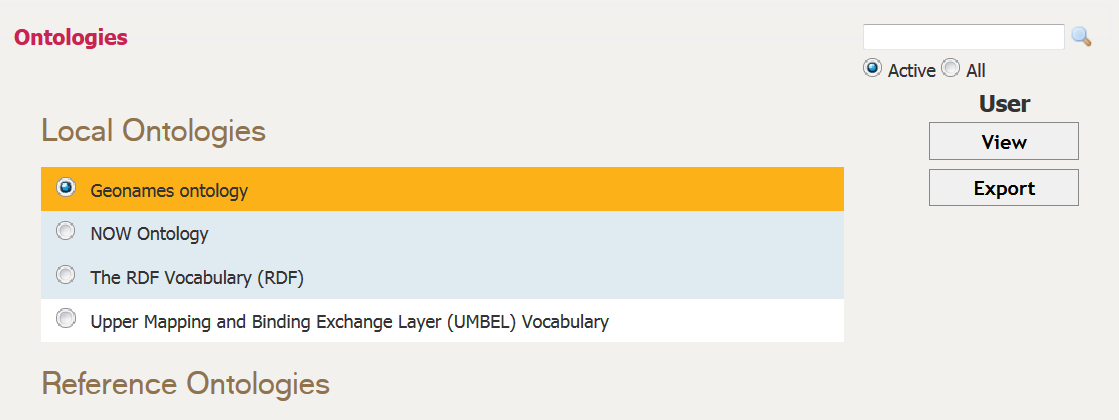

Ontologies Management Tool

The Ontologies Management Tool (OMT) is a command line tool used to manage the ontologies of an OSF Web Services network instance. It can be used to list the ontologies of an OSF Web Services instance, to manage those ontologies, to create/import new ones, to delete existing ones, and to generate underlying ontological structures.

Permissions Management Tool

The Permissions Management Tool (PMT) is a command line tool used to manage access permissions on an OSF Web Services network instance. This tool is used to list, create and delete access permissions, groups and users.

Data Validator Tool

The Data Validator Tool (DVT) is a command line tool used to perform a series of post-indexation data validation tests. It runs a series of pre-configured tests and returns validation errors if any are found.

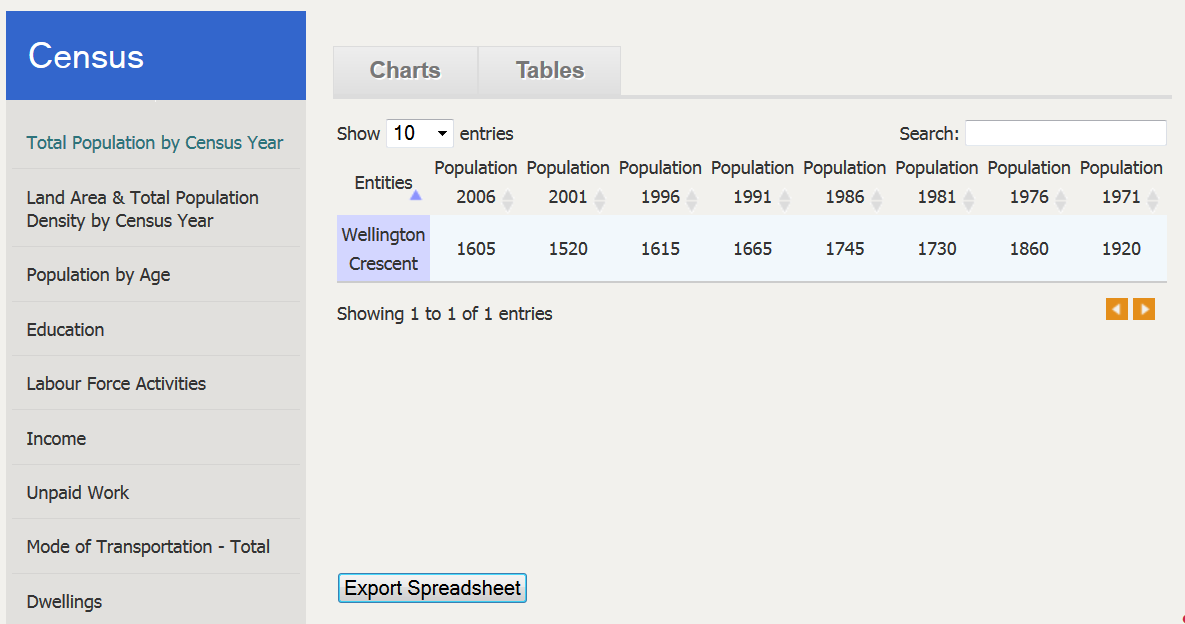

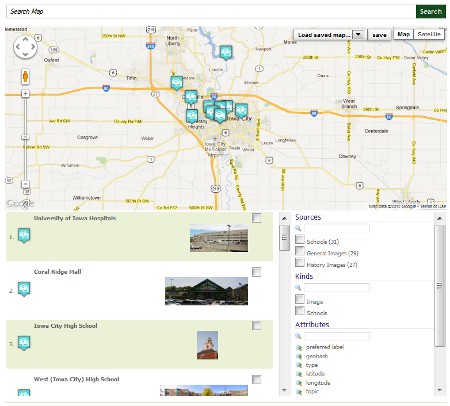

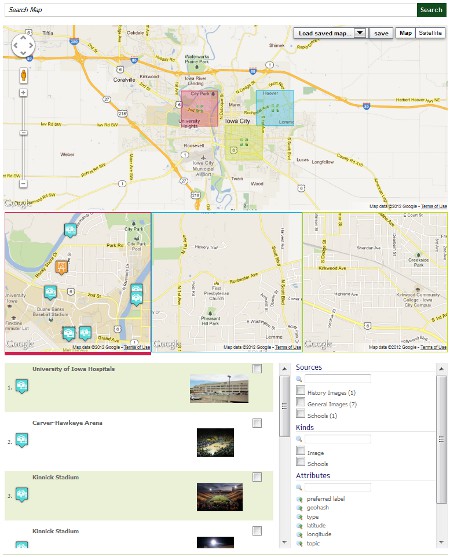

OSF Widgets

All the OSF Widgets (formerly the Semantic Components) have been updated to work with OSF 3.0. The big difference with this update is that all of the OSF Widgets now have access to an OSF for Drupal proxy. This proxy enables them to communicate with an OSF Web Services instance without having to authenticate themselves to the endpoints.

OSF Wiki

The OSF Wiki has been completely rewritten and re-organized. It is the go-to place to find more information about the Open Semantic Framework project, and all pieces of the stack.

Installing and Configuring OSF

OSF Installer

Installing and configuring OSF has never been easier. The OSF Installer utility has been improved to ease the deployment of OSF on a new Ubuntu 12.10 server. The installation tool installs and configures all the pieces required by the OSF stack. Once everything is installed and configured, it runs the OSF test suites to make sure that all the OSF functionality is fully operational on the new server.

Once the OSF stack is installed, the user can use the OSF Installer tool to install, deploy and configure Drupal 7 with OSF for Drupal.

OSF EC2

Additionally, we created a new public Amazon AWS EC2 image that includes the full OSF stack version 3.0. This new public image is available in the following regions:

| Region | Arch | Root store | AMI |
| --- | --- | --- | --- |
| us-east-1 | 64-bit | EBS | ami-afe4d1c6 |
| us-west-1 | 64-bit | EBS | ami-d01b2895 |
| us-west-2 | 64-bit | EBS | ami-c6f691f6 |
| eu-west-1 | 64-bit | EBS | ami-883fd4ff |
| sa-east-1 | 64-bit | EBS | ami-6515b478 |
| ap-southeast-2 | 64-bit | EBS | ami-4734ab7d |
| ap-southeast-1 | 64-bit | EBS | ami-364d1a64 |
| ap-northeast-1 | 64-bit | EBS | ami-476a0646 |

Once you create a new instance from that image, you will have to properly configure it to make it secure and fully operational. The only thing you have to do is to follow the steps outlined in the Creating and Configuring an Amazon EC2 AMI OSF Instance manual.

System Integration Tests

A complete suite of integration tests has been created for OSF 3.0. The test suites are composed of 746 tests and 4139 assertions. These integration tests make sure that all of the functionality of an OSF instance is working. They are run every time an OSF instance is deployed using the OSF Installer script, and they can be re-run at any time thereafter. Normally, every time an update is made on an OSF instance, the tests should be run as well to make sure that the update didn’t break anything.

These tests are testing:

- All of the input parameters of each endpoint

- All of the combinations of all the input parameters of each endpoint

- All of the mime types supported by each endpoint

- All of the expected errors returned by each endpoint.

Conclusion

We have been working on this new Open Semantic Framework version 3.0 for almost two full years now. We have been quiet during that time because coding, documenting, testing and deploying the code that we are releasing today left us no time for anything else.

This new version is a major leap forward for the Open Semantic Framework open source project. Five years ago, Mike and I set as a goal to have a complete OSF stack in place that could be leveraged by anybody to fulfill the requirements of any kind of project. I think that with this OSF version 3.0, we have reached the mid-term goal that we set for ourselves five years ago.