I recently started to investigate different ways to serialize RDF triples using Clojure code. I had at least two goals in mind. The first was to end up with an RDF serialization format that is valid Clojure code and that can easily be manipulated using core Clojure functions. The second was to be able to “execute” the code to validate the data according to the semantics of the ontologies used to define the data.

This blog post focuses on showing how the second goal can be implemented.

Before doing so, let’s take some time to explore what ‘Code as Data’ and ‘Data as Code’ may mean in that context.

Code as Data, Data as Code

What is Code as Data? It means that the program code you write is also data that can be manipulated by a program. In other words, the code you write can be given as input to a macro, which transforms it into new code that is then evaluated. The code is treated as data by the language’s own macro system, but the result of these manipulations is still executable code.

What is Data as Code? It means that you can use a programming language’s code to embed (serialize) data. It means that you can specify your own sublanguage (DSL), translate it into code (using macros) and execute the resulting code.
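To make the two ideas concrete, here is a minimal sketch in plain Clojure. The names form and unless are only illustrative; they are not part of the RDF/Clojure serialization.

[cc lang=’lisp’ line_numbers=’false’]

[raw];; Code as Data: a quoted form is an ordinary list that we can inspect and rewrite.
(def form '(+ 1 2 3))

(first form)                    ; the symbol +
(eval (cons '* (rest form)))    ; rewrite + into *, then evaluate: 6

;; A macro receives its arguments as unevaluated data and returns new code.
(defmacro unless [test & body]
  `(if ~test nil (do ~@body)))

(unless false :ran)             ; evaluates to :ran[/raw]

[/cc]

The same mechanism is what lets a record written as a Clojure map be both read as data and run as code.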

The initial goal of an RDF/Clojure serialization is to specify a way to write RDF triples (data) as Clojure (code). That code is data that can be manipulated by macros to produce executable code. Evaluating the resulting code validates the data structures (the graph defined by the triples) according to the semantics defined in the ontologies. In other words, the graph is validated by evaluating the resulting code and running its functions.

Ontology Creation

In my previous blog posts about serializing RDF data as Clojure code, I noted that the properties, classes and datatypes that I was referring to in those blog posts were to be defined elsewhere in the Clojure application and that I would cover it in another blog post. Here it is.

All of the ontology properties, classes and datatypes that we are using to serialize the RDF data are defined as Clojure code. They can be defined in a library, directly in your application’s code or even as data that gets emitted by a web service endpoint that you evaluate at runtime (for data that has not yet been evaluated).

In the tests I am doing, I define RDF properties as Clojure functions; the RDF classes and datatypes are normal records that comply with the same RDF serialization rules as defined for the instance records.

Some users may wonder: why is everything defined as a map except the properties? Each property’s RDF description is still available as a map, but we attach it as Clojure metadata to the property’s function. As you will see below, these functions are used to validate the RDF data serialized in Clojure code, which is why properties are represented as functions and not as maps like everything else.
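As a rough illustration of this split between description and behavior, the sketch below defines a made-up property called ex-name. For brevity it uses keywords as metadata keys, whereas the serialization described in this post uses vars; the URI is a placeholder.

[cc lang=’lisp’ line_numbers=’false’]

[raw];; Hypothetical property: the function validates, the var metadata describes.
(defn ^{:uri   "http://example.org/ontology#name"
        :label "name"}
  ex-name
  [v]
  (when-not (string? v)
    (throw (IllegalStateException. (str "Not a string: " v))))
  v)

(:uri (meta #'ex-name))   ; the description is read from the var's metadata
(ex-name "Bob")           ; the validation runs by calling the function[/raw]

[/cc]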

Someone could easily leverage the RDF/Clojure serialization without worrying about the ontologies. They could get the triples that describe the records without worrying about the semantics of the data as represented by the ontologies. However, if that same person wants to reason over the data, that is, to make sure the data is valid and coherent, then they will need the ontology descriptions.

Now let’s see how these ontologies are being generated.

Creating OWL Classes

As I said above, an OWL class is nothing but another record. It is described using the same rules as previously defined, but with the vocabulary of the OWL language and its specific semantics. Creating such a class is really easy: we just have to follow the semantics of the OWL language and the rules of the RDF/Clojure serialization. For example, this code creates a simple FOAF person class:

[cc lang=’lisp’ line_numbers=’false’]

[raw](def foaf:+person
  "The class of all the persons."
  {#'uri "http://xmlns.com/foaf/0.1/Person"
   #'rdf:type #'owl:+class
   #'rdfs:label "Person"
   #'rdfs:comment "The class of all the persons."})[/raw]

[/cc]

As you can see, we are describing the class the same way we were defining normal instance records. However, we are doing it using the OWL language.

Creating OWL Datatypes

Datatypes are also serialized like normal RDF/Clojure records; that is, just like classes. However, since the datatypes are fairly static in the way we define them, I created a simple macro called gen-datatype that can be used to generate datatypes:

[cc lang=’lisp’ line_numbers=’false’]

[raw](defmacro gen-datatype
  "Create a new datatype that represents an OWL datatype class.
  [name] is the name of the datatype to create.
  Optional parameters are:
  [:uri] this is the URI of the datatype to create
  [:base] this is the URI of the base XSD datatype of this new datatype
  [:pattern] this is a regex pattern used to validate that
  a given string represents a value that belongs to that datatype
  [:docstring] the docstring to use when creating this datatype"
  [name & {:keys [uri base pattern docstring]}]
  `(def ~name
     ~(str docstring)
     (merge {#'rdf:type "http://www.w3.org/TR/rdf-schema#Datatype"}
            (if ~uri {#'rdf.core/uri ~uri})
            (if ~pattern {#'xsp:pattern ~pattern})
            (if ~base {#'xsp:base ~base}))))[/raw]

[/cc]

You can use this macro like this:

[cc lang=’lisp’ line_numbers=’false’]

[raw](gen-datatype *full-us-phone-number
  :uri "http://purl.org/ontology/foo#phone-number"
  :pattern "^[0-9]{1}-[0-9]{3}-[0-9]{3}-[0-9]{4}$"
  :base "http://www.w3.org/2001/XMLSchema#string"
  :docstring "Datatype representing a full US phone number")

[/raw]

[/cc]

And it will generate a datatype like this:

[cc lang=’lisp’ line_numbers=’false’]

[raw]{#'ontologies.core/xsp:base "http://www.w3.org/2001/XMLSchema#string"
 #'ontologies.core/xsp:pattern "^[0-9]{1}-[0-9]{3}-[0-9]{3}-[0-9]{4}$"
 #'rdf.core/uri "http://purl.org/ontology/foo#phone-number"
 #'ontologies.core/rdf:type "http://www.w3.org/TR/rdf-schema#Datatype"}[/raw]

[/cc]

What this datatype defines is a class of literals that represents the full version of a US phone number. I will explain below how such a datatype is used to validate RDF data records.
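To get a feel for what the pattern accepts, it can be tried on its own at the REPL. This is only a sketch: the helper matches-datatype? is a name I made up for the example and is not part of the library.

[cc lang=’lisp’ line_numbers=’false’]

[raw];; The same pattern as in the *full-us-phone-number datatype above.
(def us-phone-pattern #"^[0-9]{1}-[0-9]{3}-[0-9]{3}-[0-9]{4}$")

(defn matches-datatype?
  "Hypothetical helper: true if the string s matches the datatype's pattern."
  [pattern s]
  (boolean (re-find pattern s)))

(matches-datatype? us-phone-pattern "1-421-353-9057")    ; true
(matches-datatype? us-phone-pattern "1-421-353-90573")   ; false, one digit too many[/raw]

[/cc]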

Creating OWL Properties

Properties are different from classes and datatypes. They are represented as functions in the RDF/Clojure serialization. I created another simple macro called gen-property to generate these OWL properties:

[cc lang=’lisp’ line_numbers=’false’]

[raw](defmacro gen-property
  "Create a new property that represents an OWL property.
  [name] is the name of the property/function to create. This is the name that will be
  used in your Clojure code.
  [:uri] this is the URI of the property to create
  [:label] this is the preferred label of the property to create
  [:description] this is the description of the property to create
  [:domain] this is the domain of the property to create. The domain is represented by one or multiple
  classes that represent that domain. If there is more than one class that represents the domain
  you can specify the ^intersection-of or the ^union-of meta-data to specify if the classes
  should be interpreted as a union or an intersection of the set of classes.
  [:range] this is the range of the property to create. The range is represented by one or multiple
  classes that represent that range. If there is more than one class that represents the range
  you can specify the ^intersection-of or the ^union-of meta-data to specify if the classes
  should be interpreted as a union or an intersection of the set of classes.
  [:sub-property-of] one or multiple properties that are super-properties of this property
  [:equivalent-property] one or multiple properties that are equivalent to this property
  [:is-object-property] true if the property being created is an object property
  [:is-datatype-property] true if the property being created is a datatype property
  [:is-annotation-property] true if the property being created is an annotation property
  [:cardinality] cardinality of the property"
  [name & {:keys [uri
                  label
                  description
                  domain
                  range
                  sub-property-of
                  equivalent-property
                  is-object-property
                  is-datatype-property
                  is-annotation-property
                  cardinality]}]
  (let [vals (gensym "label-")
        docstring (if description
                    (str description ".\n [" vals "] is the preferred label to specify.")
                    "")
        ; Default to a datatype property when no other kind is requested
        type (if is-object-property
               #'owl:+object-property
               (if is-annotation-property
                 #'owl:+annotation-property
                 #'owl:+datatype-property))
        ; The RDF description of the property, attached to the function as metadata
        metadata (merge (if uri {#'rdf.core/uri uri})
                        (if type {#'rdf:type type})
                        (if label {#'iron:pref-label label})
                        (if description {#'iron:description description})
                        (if range {#'rdfs:range range})
                        (if domain {#'rdfs:domain domain})
                        (if cardinality {#'owl:cardinality cardinality}))]
    `(defn ~(with-meta name metadata)
       ~(str docstring)
       [~vals]
       (rdf.property/validate-property #'~name ~vals))))[/raw]

[/cc]

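Note that this macro currently only accommodates a subset of the OWL language. For example, only an exact cardinality can be specified (there is no minimum or maximum cardinality), and the sub-property-of and equivalent-property options are accepted but not yet used. I only created what was required for writing this blog post.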

You can then use this macro to create new properties like this:

[cc lang=’lisp’ line_numbers=’false’]

[raw](gen-property foo:phone
  :is-datatype-property true
  :label "phone number"
  :uri "http://purl.org/ontology/foo#phone"
  :range *full-us-phone-number
  :domain #'owl:+thing
  :cardinality 1)

(gen-property foo:knows
  :is-object-property true
  :label "a person that knows another person"
  :uri "http://purl.org/ontology/foo#knows"
  :range #'umbel.ref/umbel-rc:+person
  :domain #'umbel.ref/umbel-rc:+person)

[/raw]

[/cc]

Some other Classes, Datatypes and Properties

So, here is the list of classes, datatypes and properties that will be used later in this blog post for demonstrating how validation occurs in such a framework:

[cc lang=’lisp’ line_numbers=’false’]

[raw](in-ns 'rdf.core)

(defn uri
  [s]
  (try
    (java.net.URI. ^String s)
    (catch Exception e
      (throw (IllegalStateException. (str "Invalid URI: \"" s "\""))))))

(defn datatype
  [s]
  ; A datatype must be given as a var whose value is typed as a Datatype
  (if (var? s)
    (if (not= (get @s #'ontologies.core/rdf:type) "http://www.w3.org/TR/rdf-schema#Datatype")
      (throw (IllegalStateException. (str "Provided value for datatype is not a datatype: \"" s "\""))))
    (throw (IllegalStateException. (str "Provided value for datatype is not a datatype: \"" s "\"")))))

(in-ns 'ontologies.core)

(gen-property iron:pref-label
  :uri "http://purl.org/ontology/iron#prefLabel"
  :label "Preferred label"
  :description "Preferred label for describing a resource"
  :domain #'owl:+thing
  :range #'rdfs:*literal
  :is-datatype-property true)

(def owl:+thing
  "The class of OWL individuals."
  {#'uri "http://www.w3.org/2002/07/owl#Thing"
   #'rdf:type #'rdfs:+class
   #'rdfs:label "Thing"
   #'rdfs:comment "The class of OWL individuals."})

(gen-datatype xsd:*string
  :uri "http://www.w3.org/2001/XMLSchema#string"
  :docstring "Datatype that represents all the XSD strings")

[/raw]

[/cc]

Concluding with Ontologies

Ontologies are easy to write in RDF/Clojure. There is a simple set of macros that can be used to help create the ontology classes, properties and datatypes. In the future, I plan to create a library that uses the OWLAPI to take any OWL ontology and serialize it using these rules. The output could be Clojure code like this, or JAR libraries. I will also investigate using more idiomatic Clojure projects such as Phil Lord’s Tawny-OWL.

RDF Data Instantiation Using Clojure Code

Now that we have the classes, datatypes and properties defined in our Clojure application, we can start defining data records like this:

[cc lang=’lisp’ line_numbers=’false’]

[raw](def valid-record (r {uri "http://foo-bar.com/test/"
                     rdf:type owl:+thing
                     foo:phone ["1-421-353-9057"]
                     iron:pref-label {value "Test cardinality validation"
                                      lang "en"
                                      datatype xsd:*string}}))

[/raw]

[/cc]

Data Validation

The valid-record defined above describes something with a phone number and a preferred label. The data is there and available to you. Now, what if I would like to do a bit more than this? What if I would like to validate it?

Validating such a record is as easy as evaluating it. What does that mean? Each key of the map that describes the record refers to a property function, so evaluating an entry means calling that function with the value specified in the record. We then iterate over the whole map to validate all of the triples.
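The idea can be tried in isolation with a toy example. Everything in this sketch (ex-label, ex-age, ex-record, check-record) is invented for illustration and is much simpler than the real property functions generated by gen-property.

[cc lang=’lisp’ line_numbers=’false’]

[raw];; Two hypothetical property functions; each one validates its own value.
(defn ex-label [v]
  (when-not (string? v)
    (throw (IllegalStateException. (str "label must be a string: " v)))))

(defn ex-age [v]
  (when-not (integer? v)
    (throw (IllegalStateException. (str "age must be an integer: " v)))))

;; The record maps property vars to values.
(def ex-record {#'ex-label "Bob"
                #'ex-age   42})

;; "Evaluating" the record: call every property function on its value.
(defn check-record [record]
  (doseq [[property value] record]
    (property value))   ; a var can be invoked like the function it holds
  :valid)

(check-record ex-record)   ; returns :valid, or throws on the first invalid value[/raw]

[/cc]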

To perform this kind of process, we can create a validate-resource function that looks like:

[cc lang=’lisp’ line_numbers=’false’]

[raw](defn validate-resource [resource]
  (doseq [[property value] resource]
    (println (str "validating resource property: " property))
    ; Only keys that refer to a function are treated as properties to validate
    (when (fn? @property)
      (@property value))))

[/raw]

[/cc]

You can use it like this:

[cc lang=’lisp’ line_numbers=’false’]

[raw](validate-resource valid-record)[/raw]

[/cc]

If no exceptions are thrown, then the record is considered valid according to the ontology specifications. Easy, no? Now let’s take a look at how this works.

If you check the gen-property macro, you will notice that every time a property function is called, the #'rdf.property/validate-property function is called in turn. This function validates the property given the specified value(s), according to the description of the property in the ontology specification. The validate-property function looks like:

[cc lang=’lisp’ line_numbers=’false’]

[raw](defn validate-property
  "Validate that the values of the property are valid according to the description of that property
  [property] should be the reference to the function, like #'foo:phone
  [values] are the actual values of that property"
  [property values]
  (do
    (validate-owl-cardinality property values)
    (validate-rdfs-range property values)))

[/raw][/cc]

It runs a series of other functions that each validate a different characteristic of a property. For this blog post, we demonstrate how the following characteristics are validated:

- Cardinality of a property

- URI validation

- Datatype validation

- Range validation when the range is a class.

Cardinality Validation

Validating the cardinality of a property means that we check if the number of values of a given property is as specified in the ontology. In this example, we validate the exact cardinality of a property. It could be extended to validate the maximum and minimum cardinalities as well.

The function that validates the cardinality is the validate-owl-cardinality function that is defined as:

[cc lang=’lisp’ line_numbers=’false’]

[raw](defn validate-owl-cardinality
  [property values]
  (doseq [[meta-key meta-val] (seq (meta property))]
    ; Only validate if there is an owl:cardinality property defined in the metadata
    (if (= meta-key #'ontologies.core/owl:cardinality)
      ; If the value is a string, a var or a map, we check if the cardinality is 1
      (if (or (string? values) (map? values) (var? values))
        (if (not= meta-val 1)
          (throw (IllegalStateException.
                   (format "CARDINALITY VALIDATION ERROR: property %s has 1 values and was expecting %d values" property meta-val))))
        ; If the value is an array, we validate the expected cardinality
        (if (not= (count values) meta-val)
          (throw (IllegalStateException.
                   (format "CARDINALITY VALIDATION ERROR: property %s has %d values and was expecting %d values" property (count values) meta-val))))))))[/raw]

[/cc]

For each property, it checks to see if the owl:cardinality property is defined. If it is, then it makes sure that the number of values for that property is valid according to what is defined in the ontology. If there is a mismatch, then the validation function will throw an exception and the validation process will stop.

Here is an example of a record that has a cardinality validation error as defined by the property (see the description of the foo:phone property above):

[cc lang=’lisp’ line_numbers=’false’]

[raw](def card-validation-test (r {uri "http://foo-bar.com/test/"
                              rdf:type owl:+thing
                              foo:phone ["1-421-353-9057" "(1)-(412)-342-3246"]
                              iron:pref-label {value "Test cardinality validation"
                                               lang "en"
                                               datatype xsd:*string}}))[/raw]

[/cc]

[cc lang=’lisp’ line_numbers=’false’]

[raw]user> (validate-resource card-validation-test)
IllegalStateException CARDINALITY VALIDATION ERROR: property #'dataset-test.core/foo:phone has 2 values and was expecting 1 values rdf.property/validate-owl-cardinality (property.clj:36)[/raw]

[/cc]
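As mentioned earlier, the exact-cardinality check could be extended to minimum and maximum cardinalities. The following is only a sketch of what that might look like. The function check-cardinality and the keys :exact, :min-count and :max-count are assumptions made for this example; they do not exist in the code shown in this post.

[cc lang=’lisp’ line_numbers=’false’]

[raw](defn check-cardinality
  "Hypothetical helper. `constraints` may contain :exact, :min-count and
  :max-count. A value that is not a vector or list counts as a single value."
  [values {:keys [exact min-count max-count]}]
  (let [n    (if (sequential? values) (count values) 1)
        fail (fn [msg]
               (throw (IllegalStateException.
                        (str "CARDINALITY VALIDATION ERROR: " msg))))]
    (cond
      (and exact (not= n exact))
      (fail (str "expected exactly " exact " values, got " n))

      (and min-count (< n min-count))
      (fail (str "expected at least " min-count " values, got " n))

      (and max-count (> n max-count))
      (fail (str "expected at most " max-count " values, got " n))

      :else n)))

(check-cardinality ["1-421-353-9057"] {:exact 1})   ; returns 1[/raw]

[/cc]

Calling it with three values and {:min-count 1 :max-count 2} would throw, in the same way validate-owl-cardinality does for a mismatch.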

URI Validation

Everything you define in RDF/Clojure has a URI. However, not every string is a valid URI. All of the URIs you may define can be validated as well. When you define a URI, you use the #'rdf.core/uri function to specify the URI. That function is defined as:

[cc lang=’lisp’ line_numbers=’false’]

[raw](defn uri
  [s]
  (try
    (java.net.URI. ^String s)
    (catch Exception e
      (throw (IllegalStateException. (str "Invalid URI: \"" s "\""))))))[/raw]

[/cc]

As you can see, we use the java.net.URI constructor to validate the URI you are defining for your records/classes/properties/datatypes. If you make a mistake when writing a URI, then a validation error will be thrown and the validation process will stop.

Here is an example of a record that has an invalid URI:

[cc lang=’lisp’ line_numbers=’false’]

[raw](def uri-validation-test (r {uri "-http://foo-bar.com/test/"
                             rdf:type owl:+thing
                             foo:phone "1-421-353-9057"
                             iron:pref-label {value "Test URI validation"
                                              lang "en"
                                              datatype xsd:*string}}))[/raw]

[/cc]

[cc]

[raw]user> (validate-resource uri-validation-test)
IllegalStateException Invalid URI: "-http://foo-bar.com/test/" rdf.core/uri (core.clj:16)[/raw]

[/cc]
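The underlying java.net.URI check can also be tried directly, outside of any record. In this sketch, valid-uri? is a made-up predicate that returns a boolean instead of throwing.

[cc lang=’lisp’ line_numbers=’false’]

[raw](import 'java.net.URI 'java.net.URISyntaxException)

(defn valid-uri?
  "Hypothetical predicate: true if java.net.URI can parse the string s."
  [s]
  (try
    (URI. ^String s)
    true
    (catch URISyntaxException _
      false)))

(valid-uri? "http://foo-bar.com/test/")    ; true
(valid-uri? "-http://foo-bar.com/test/")   ; false, a scheme cannot start with "-"[/raw]

[/cc]

Keep in mind that java.net.URI also accepts relative references, so a plain string such as "foo" parses successfully. A stricter check would be needed to require absolute URIs.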

Datatype Validation

In OWL, a datatype property is used to refer to literal values that belong to classes of literals (datatype classes). A datatype class represents all the literal values that conform to the definition of the datatype. For example, the *full-us-phone-number datatype we described above defines the class of all the literals that are full US phone numbers.

Validating the value of a property according to its datatype means that we make sure that the literal value(s) belong to that datatype. Most of the time, people will use the XSD datatypes. If custom datatypes are created, then they will be based on one of the XSD datatypes, and a regex pattern will be defined to specify how the literal should be constructed.

[cc lang=’lisp’ line_numbers=’false’]

[raw](defn validate-rdfs-range
  [property values]
  (do
    ; If the value is a map, then validate the "value", "lang" and "datatype" assertions
    (if (map? values)
      (validate-map-properties values))
    (doseq [[meta-key ranges] (seq (meta property))]
      ; make sure a range is defined for this property
      (if (= meta-key #'ontologies.core/rdfs:range)
        (let [ranges (if (vector? ranges)
                       ranges
                       ^:intersection-of [ranges])]
          (if (true? (:intersection-of (meta ranges)))
            ; consider that all the values of the range are an intersection-of
            (doseq [range ranges]
              (if (is-datatype-property? property)
                ; we are checking the range of a datatype property
                ; @TODO here we have to change that portion to call a function that will do the validation
                ; according to the existing XSD types, or any custom datatype based on these core
                ; XSD datatypes. Just like the DVT (Dataset Validation Tool)
                ;
                ; For now, we simply test using a datatype that has a pattern defined.
                (let [pattern (get range #'ontologies.core/xsp:pattern)]
                  (if pattern
                    ; a validation pattern has been defined for this value
                    (if (vector? values)
                      ; Validate all the values of the property according to this Datatype
                      (doseq [v values]
                        (validate-range-pattern v pattern ranges))
                      ; Validate the value according to the datatype
                      (validate-range-pattern values pattern ranges))))
                ; we are checking the range of an object property
                (if (vector? values)
                  (doseq [v values]
                    (validate-range-object v range property))
                  (validate-range-object values range property))))
            ; consider that all the values of the range are a union-of
            (println "@TODO Ranges union validation")))))))

(defn- validate-range-pattern
  [v pattern range]
  (if (string? v)
    (if (nil? (re-seq (java.util.regex.Pattern/compile pattern) v))
      (throw (IllegalStateException.
               (format "Value \"%s\" invalid according to the definition of the datatype \"%s\"" v range))))
    (if (and (map? v) (nil? (validate-map-properties v)))
      (if (nil? (re-seq (java.util.regex.Pattern/compile pattern) (get v 'value)))
        (throw (IllegalStateException.
                 (format "Value \"%s\" invalid according to the definition of the datatype \"%s\"" v range)))))))

(defn- validate-map-properties
  [m]
  (doseq [[p v] m]
    (if (fn? @p)
      (@p v))))

[/raw]

[/cc]

This function validates the range of a property. It checks what kind of value was given for the input property according to the RDF/Clojure specification (is it a string, a map, an array, a var, etc.?). Then it checks if a range has been defined for the property, and whether the property is an object property or a datatype property. If it is a datatype property, it validates the value(s) according to the datatype defined in the range of the property.

Here is an example of a few records that have different datatype validation errors:

[cc lang=’lisp’ line_numbers=’false’]

[raw](def datatype-validation-test (r {uri "http://foo-bar.com/test/"
                                  rdf:type owl:+thing
                                  foo:phone "1-421-353-90573"
                                  iron:pref-label {value "Test cardinality validation"
                                                   lang "en"
                                                   datatype xsd:*string}}))

(def datatype-validation-test-2 (r {uri "http://foo-bar.com/test/"
                                    rdf:type owl:+thing
                                    foo:phone "1-421-353-9057"
                                    iron:pref-label {value "Test datatype validation"
                                                     lang "en"
                                                     datatype "not-a-datatype"}}))

(def xsd:*string-not-a-datatype)

(def datatype-validation-test-3 (r {uri "http://foo-bar.com/test/"
                                    rdf:type owl:+thing
                                    foo:phone "1-421-353-9057"
                                    iron:pref-label {value "Test datatype validation"
                                                     lang "en"
                                                     datatype xsd:*string-not-a-datatype}}))

(def datatype-validation-test-4 (r {uri "http://foo-bar.com/test/"
                                    rdf:type owl:+thing
                                    foo:phone [{value "1-421-353-9057"
                                                datatype xsd:*string-not-a-datatype}]
                                    iron:pref-label {value "Test datatype validation"
                                                     lang "en"
                                                     datatype xsd:*string}}))[/raw]

[/cc]

[cc lang=’lisp’ line_numbers=’false’]

[raw]user> (validate-resource datatype-validation-test)
IllegalStateException Value "1-421-353-90573" invalid according to the definition of the datatype "[{#'ontologies.core/xsp:pattern "^[0-9]{1}-[0-9]{3}-[0-9]{3}-[0-9]{4}$", #'rdf.core/uri "http://purl.org/ontology/foo#phone-number", #'ontologies.core/rdf:type "http://www.w3.org/TR/rdf-schema#Datatype"}]" rdf.property/validate-range-pattern (property.clj:150)

user> (validate-resource datatype-validation-test-2)
IllegalStateException Provided value for datatype is not a datatype: "not-a-datatype" rdf.core/datatype (core.clj:31)

user> (validate-resource datatype-validation-test-3)
IllegalStateException Provided value for datatype is not a datatype: "#'dataset-test.core/xsd:*string-not-a-datatype" rdf.core/datatype (core.clj:30)

user> (validate-resource datatype-validation-test-4)
IllegalStateException Provided value for datatype is not a datatype: "#'dataset-test.core/xsd:*string-not-a-datatype" rdf.core/datatype (core.clj:30)

[/raw]

[/cc]

As you can see, the validate-rdfs-range function is still incomplete regarding datatype validation. I am still updating it to validate all the existing XSD datatypes. Custom datatypes also need to be validated more thoroughly, for example by taking their xsp:base type into account. The code to write is similar to what I created for the Data Validation Tool (which is written in PHP).
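One possible direction for that missing piece, given purely as a sketch, is a lookup table from base XSD datatype URIs to predicates on the literal’s lexical form, combined with the optional pattern. The names xsd-validators and valid-literal? are assumptions of this example, only three XSD types are covered, and unknown base types are simply accepted.

[cc lang=’lisp’ line_numbers=’false’]

[raw](def xsd-validators
  "Hypothetical lookup from an XSD datatype URI to a predicate on the lexical form."
  {"http://www.w3.org/2001/XMLSchema#string"  string?
   "http://www.w3.org/2001/XMLSchema#integer" #(boolean (re-matches #"[+-]?[0-9]+" %))
   "http://www.w3.org/2001/XMLSchema#boolean" #(contains? #{"true" "false" "1" "0"} %)})

(defn valid-literal?
  "True if the string s is valid for the base datatype and, when a pattern
  is given, also matches that pattern."
  [base-uri pattern s]
  (let [base-ok? (get xsd-validators base-uri (constantly true))]
    (and (base-ok? s)
         (or (nil? pattern)
             (boolean (re-find (re-pattern pattern) s))))))

(valid-literal? "http://www.w3.org/2001/XMLSchema#integer" nil "-42")   ; true
(valid-literal? "http://www.w3.org/2001/XMLSchema#integer" nil "4.2")   ; false[/raw]

[/cc]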

Range validation when the range is a class

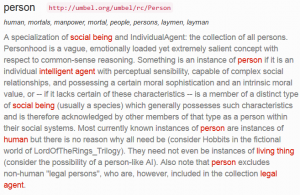

Finally, let’s show how the range of an object property can be validated. Validating the range of an object property means that we make sure that the record referenced by the object property belongs to the class of the range of the property.

For example, consider a property foo:knows that has a range that specifies that all the values of foo:knows need to belong to the class umbel-rc:+person. This means that all of the values defined for the foo:knows property for any record need to refer to a record that is of type umbel-rc:+person. If that is not the case, then there is a validation error.

Here is an example of a record where the foo:knows property is not properly used:

[cc lang=’lisp’ line_numbers=’false’]

[raw](def wrench (r {uri "http://foo-bar.com/test/bob"
                rdf:type umbel.ref/umbel-rc:+product
                iron:pref-label "The biggest wrench ever"}))

(def object-range-validation-test (r {uri "http://foo-bar.com/test/bob"
                                      rdf:type umbel.ref/umbel-rc:+person
                                      foo:knows wrench
                                      iron:pref-label {value "Test object range validation"
                                                       lang "en"
                                                       datatype xsd:*string}}))[/raw]

[/cc]

Remember we defined the foo:knows property with the range of umbel-rc:+person. However, in the example, the reference is to a wrench record that is of type umbel-rc:+product. Thus, we get a validation error:

[cc lang=’lisp’ line_numbers=’false’]

[raw]user> (validate-resource object-range-validation-test)
IllegalStateException The resource "http://umbel.org/umbel/rc/Product" referenced by the property "#'dataset-test.core/foo:knows" does not belong to the class "#'umbel.ref/umbel-rc:+person" as defined by the range of the property rdf.property/validate-range-object (property.clj:142)[/raw]

[/cc]

The function that validates the ranges of the object properties is defined as:

[cc lang=’lisp’ line_numbers=’false’]

[raw](defn- validate-range-object
  [r range property]
  (do (println range)
      (let [; Resolve the referenced record: deref a var, use a map as-is
            r (if (var? r)
                (deref r)
                (if (map? r)
                  r
                  (if (string? r)
                    ; @TODO get the resource's description from a dataset index
                    {})))
            ; URI of the class that is the type of the referenced record
            uri (get (deref (get r #'ontologies.core/rdf:type)) #'rdf.core/uri)
            uri-ending (do (println uri)
                           (if (> (.lastIndexOf uri "/") -1)
                             (subs uri (inc (.lastIndexOf uri "/")))
                             ""))
            ; Ask the UMBEL web service for the super-classes of that class
            super-classes (try
                            (read-string (:body (clj-http.client/get (str "http://umbel.org/ws/super-classes/" uri-ending)
                                                                     {:headers {"Accept" "application/clojure"}
                                                                      :throw-exceptions false})))
                            (catch Exception e
                              nil))
            range-uri (get @range #'rdf.core/uri)]
        (if-not (some #{range-uri} super-classes)
          (throw (IllegalStateException. (str "The resource \"" uri "\" referenced by the property \"" property "\" does not belong to the class \"" range "\" as defined by the range of the property")))))))[/raw]

[/cc]

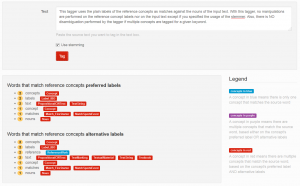

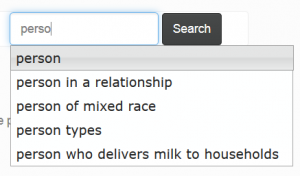

Normally, this kind of validation should be done using the descriptions of the loaded ontologies. However, for the benefit of this blog post, I used a different way to perform this validation. I purposefully used some UMBEL Reference Concepts as the types of the records I described. The object range validation function then leverages the UMBEL super-classes web service endpoint to get the super-classes of a given class.

So this function looks up the record(s) referenced by the foo:knows property and checks their type(s). What needs to be validated is whether the type of each referenced record is the same as, or is included in, the class defined in the range of the foo:knows property.

In our example, the range is #'umbel-rc:+person. This means that the foo:knows property can only refer to umbel-rc:+person records. In the example where we have a validation error, the type of the wrench record is umbel-rc:+product. What the validation function does is get the list of all the super-classes of the umbel-rc:+product class and check whether umbel-rc:+person is among them. In this case it is not, so an error is thrown.
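Stripped of the web service call, the membership test comes down to a set lookup. The sketch below uses a hard-coded, made-up list of super-classes with example.org URIs in place of the endpoint’s answer, and the Person URI is assumed by analogy with the Product URI shown in the error message. Unlike validate-range-object, the hypothetical in-range? helper also accepts a type that is identical to the range, since this post does not say whether the endpoint includes the class itself in its answer.

[cc lang=’lisp’ line_numbers=’false’]

[raw];; Assumed URI for the range class.
(def person-uri "http://umbel.org/umbel/rc/Person")

;; Made-up super-class list; the real one comes from the web service.
(def product-super-classes
  ["http://example.org/rc/Artifact"
   "http://www.w3.org/2002/07/owl#Thing"])

(defn in-range?
  "Hypothetical helper: true if the type is the range class itself
  or has the range class among its super-classes."
  [range-uri type-uri super-classes]
  (boolean (or (= range-uri type-uri)
               (some #{range-uri} super-classes))))

(in-range? person-uri "http://umbel.org/umbel/rc/Product" product-super-classes)   ; false[/raw]

[/cc]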

What is interesting with this example is that the UMBEL super-classes web service endpoint returns the list of super-classes as Clojure code. We then use the read-string function to read that list into a Clojure data structure and manipulate it as if it were part of the application’s code.
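Here is a small sketch of that reading step, using a hard-coded string in place of the HTTP response body (the URIs in it are made up). It uses clojure.edn/read-string rather than clojure.core/read-string: the core reader can execute code embedded in the input when *read-eval* is enabled, so the EDN reader is the safer choice for text received from a remote service. That substitution is my suggestion and not what the function above does.

[cc lang=’lisp’ line_numbers=’false’]

[raw](require '[clojure.edn :as edn])

;; Stand-in for the response body returned by the web service.
(def body
  "[\"http://example.org/rc/Artifact\" \"http://www.w3.org/2002/07/owl#Thing\"]")

;; Reading turns the text into an ordinary Clojure vector of strings.
(def super-classes (edn/read-string body))

(vector? super-classes)                                          ; true
(some #{"http://www.w3.org/2002/07/owl#Thing"} super-classes)    ; returns the matching URI[/raw]

[/cc]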

Conclusion

What is elegant with this kind of RDF/Clojure serialization is that the validation of RDF data is the same as evaluating the underlying code (Data as Code). If the data is invalid, then exceptions are thrown and the validation process aborts.

One thing that I have yet to investigate with such an RDF/Clojure serialization is how the semantics of the properties, classes and datatypes could be embedded into the RDF/Clojure records such that we end up with stateful RDF records that embed their own semantics at a specific point in time. This would mean that even if an ontology changes in the future, the records will still be valid according to the original ontology that was used to describe them at a specific point in time (when they got written, when they got emitted by a web service endpoint, etc.).

Also, as some of my readers pointed out with my previous blog post about this subject, the fact that I use vars to serialize the RDF triples means that the serialization won’t produce valid ClojureScript code, since vars don’t exist in ClojureScript. Paul Gearon proposed using keywords as keys instead of vars and then, to get the same effect as with the vars, using a lookup index to call the functions. This avenue will be investigated as well and should be the topic of a future blog post about this RDF/Clojure serialization.
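To give an idea of what the keyword approach might look like, here is a rough sketch. It is my own guess at the shape of such a design, not Paul Gearon’s proposal in detail; the names check-phone, property-index, keyword-record and validate-keyword-record are all invented for this example.

[cc lang=’lisp’ line_numbers=’false’]

[raw];; A validation function for one property (ex-info is used for the error).
(defn check-phone [v]
  (when-not (and (string? v)
                 (re-matches #"[0-9]-[0-9]{3}-[0-9]{3}-[0-9]{4}" v))
    (throw (ex-info "Invalid phone number" {:value v}))))

;; The lookup index: keyword -> validation function.
(def property-index
  {:foo/phone check-phone})

;; The record now uses keywords as keys instead of vars.
(def keyword-record
  {:uri       "http://foo-bar.com/test/"
   :foo/phone "1-421-353-9057"})

(defn validate-keyword-record
  "Call the indexed function, if any, for every key of the record."
  [index record]
  (doseq [[k v] record
          :let [f (get index k)]
          :when f]
    (f v))
  :valid)

(validate-keyword-record property-index keyword-record)   ; returns :valid[/raw]

[/cc]

The trade-off is that the record no longer carries a direct reference to its semantics: the index has to be supplied separately at validation time.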